Predictive Processing: A Brief Introduction

Your brain may be an organ of prediction.

Writing an introduction to the ideas of predictive processing is no easy task. I have chosen to present them in a manner that is as digestible as I am able to make them. This is not a rigorous text, but rather a simple overview of what I personally consider to be the most important ideas for a newcomer to acquaint themselves with. I rely on analogies, metaphors, and other poetic devices for the simple reason that they are effective ways of communicating complex ideas. With these caveats in mind, I welcome you to explore what I consider to be the most important and interesting recent development in the study of mind and behavior.

Between your ears rests a protein-rich blob that has been fine-tuned across millions of years of evolution for a singular purpose: prediction.

That’s the shocking thesis supported by increasingly-numerous experimental observations and a novel interdisciplinary framework in the cognitive sciences: predictive processing is a unified theory of brain function. It explains memory, attention, consciousness, learning, motivation, perception, action, and any number of phenomena associated with the workings of brain cells.

That a single framework should be able to explain such a beast of complexity as the brain is to many laypeople and scientists alike too much to swallow. It defies common sense. The brain is messy and we should expect the same of its associated models. Yet, there’s precedent for such hubris. Darwin’s theory of evolution emerged as an all-encompassing and boldly unifying framework for biology at large. Philosopher Daniel Dennett has referred to evolution as a “universal acid”, eating its way through every field it touches1. It’s such a powerful idea that there’s nothing to be done but to succumb to its sheer explanatory force. That’s the cognitive niche proponents of predictive processing envision. They recognize in it a potential to forever transform the way we think about mind and behavior the same way evolution transformed biology.

The Growth of Predictive Processing

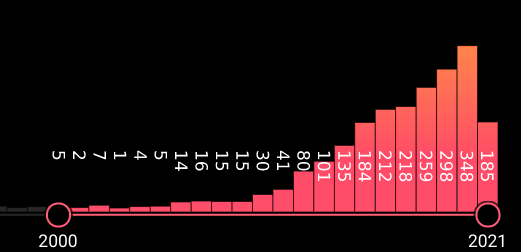

PubMed is a database for biomedical academic papers. If we enter terms relevant to predictive processing2, we get this nice illustration of the number of papers on the topic published year by year:

As you can see, the growth in interest has been quite explosive.

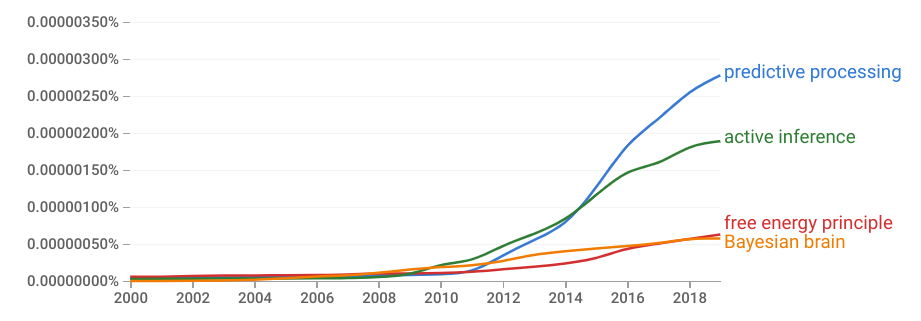

For more general interest, we can use Google’s Ngram viewer3. This is a tool that keeps track of the relative popularity of words, terms, and expressions in English-language books over time. We can see that things really took off around 2010, which makes sense in terms of the PubMed graph above: this is when people, scientists and laypeople alike, began taking serious notice of predictive processing.

I find that it’s often useful to look at graphs like these when thinking about how something has changed over time. It’s also useful for comparison purposes: ‘predictive processing’ and ‘active inference’ are much more popular than the more technical terms ‘free energy principle’ and ‘Bayesian brain’.

A brief glossary:

Predictive processing: an umbrella term for a family of related theories focused on the role of the brain as an organ of prediction.

Active inference: a theory of cognition arguing that action is used to reduce our uncertainty about future outcomes relative to an internal model of ourselves and the world.

Free energy principle: a principle (not a theory) of brain function based on mathematical arguments. Out of all possible states we can inhabit, few are compatible with life. We must, then, minimize the time spent in surprising states. This is equivalent to continuously predicting what will happen next.

Bayesian brain: a family of theories arguing that perception (and less commonly, action) can be understood via the process of (approximate) Bayesian inference.

Predictive coding: a compression algorithm that has been used to describe neural coding. Input that can be predicted is suppressed such that only surprising (novel) information is processed.

Of these, predictive coding has the longest history. It was invented for data compression in the 50s and has been used as a model of perception in neurobiology since the 80s4. As such, many neuroscientists tend to use ‘predictive coding’ as a synonym for ‘predictive processing’. I decided not to include it in the graph because the same term is used in different contexts and its inclusion would make the graph needlessly confusing.
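To make the compression idea concrete, here is a minimal sketch in Python. It assumes the simplest predictor I can think of (each sample is expected to equal the one before it) and keeps only the difference. The function names `encode` and `decode` are my own, and this is an illustration of the principle rather than any particular codec or neural model.

```python
def encode(samples):
    """Replace each sample with its prediction error.

    The predictor is deliberately simple (an assumption for illustration):
    each sample is predicted to equal the previous one.
    """
    residuals = []
    prediction = 0
    for sample in samples:
        residuals.append(sample - prediction)  # only the surprise is kept
        prediction = sample                    # next prediction: "same as now"
    return residuals


def decode(residuals):
    """Rebuild the signal by adding each error to the running prediction."""
    samples = []
    prediction = 0
    for residual in residuals:
        sample = prediction + residual
        samples.append(sample)
        prediction = sample
    return samples


signal = [10, 10, 10, 11, 11, 11, 11, 15]
residuals = encode(signal)
print(residuals)  # mostly zeros: predictable input is suppressed
assert decode(residuals) == signal
```

A steady signal turns into a run of zeros, which is cheap to store or transmit. Only the moments where the input departs from the prediction carry any information.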

Active inference5 and the free energy principle6 were both introduced by neuroscientist Karl Friston. Predictive processing was introduced as an umbrella term by philosopher Andy Clark after he discovered Friston’s ideas and recognized their vast potential7.

With this out of the way, we are ready to delve deeper into the topic of predictive processing.

The Flow of Predictions: Vision

It’s helpful to think about predictive processing in terms of the flow of information.

In addition to the passive incoming flow of sensory information, there’s an active anticipatory flow travelling in the opposite direction. This is the flow of predictions. As these streams crash into each other, a comparison is made. What ultimately leaves a mark is the difference between the two: prediction errors. When you subtract expected information from actual information, what you are left with is unexpected information. This is the portion of the incoming flow which you failed to anticipate. It makes sense, then, that this newsworthy information is what you’ll want to remember. According to the predictive processing framework, the main purpose of the brain is to keep such surprises at a minimum.

What you see around you is in a large part the result of an adaptive perceptual filter, shaped by experience. Prediction errors are used to update this filter.
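The subtraction and the updating can be sketched in a few lines of Python. This is a toy under stated assumptions: a single number stands in for the whole perceptual filter, and the `learning_rate` parameter and the `update` function are hypothetical names chosen for illustration.

```python
def update(prediction, observation, learning_rate=0.3):
    """Return the prediction error and the corrected prediction."""
    error = observation - prediction  # actual minus expected
    return error, prediction + learning_rate * error


prediction = 0.0
errors = []
for observation in [5.0] * 10:  # a steady, learnable input
    error, prediction = update(prediction, observation)
    errors.append(abs(error))

# Each error is smaller than the one before it.
assert all(later < earlier for earlier, later in zip(errors, errors[1:]))
print(round(prediction, 3))
```

As long as the input stays the same, every round leaves less to be surprised about.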

Traditionally, this process is pictured in terms of hierarchy. Higher-level units in the brain predict the activity of lower-level units. In turn, the lower-level units provide error feedback to higher levels. This hierarchical organization is very useful. Low levels can concentrate their efforts on small “patches” of reality. If we go up a level, a collection of small patches is given the same treatment: as a single patch. In terms of perception, we speak of receptive fields. In early stages of the visual processing hierarchy, neurons focus on small receptive fields (patches of visual reality) that correspond to the activity registered by small patches of your retina. At higher levels, the receptive fields are larger. The hippocampus can be said to represent the top of the visual hierarchy, processing information at the highest spatiotemporal level available to us.

Filters capable of processing information at higher and higher scales in time and in space feed into each other in an impressive hierarchy. Low-level errors can impact high-level prediction units.

The illustration below shows the flow of visual information in the macaque brain8. The hippocampus (HC) sits on top. Directly below it is the entorhinal cortex, EC. At the bottom we have retinal ganglion cells (RGC) and the lateral geniculate nucleus of the thalamus (LGN). To me, this flow of information is awe-inspiring. Neurons collaborating at increasingly higher levels are able to perceive reality at increasingly larger spatiotemporal scales. From a lone photon hitting a photoreceptor in your retina to the activation of neural clusters in the hippocampus there’s a steady predictive flow of information.

Your primary visual cortex (V1) is located at the back of your brain. Only a small portion of the information it processes is received from bottom-up sources. The rest—also the majority—flows from above in the shape of top-down predictions.

Briefly, take a look around you. Isn’t it wonderful to know that most of your perceptual experience reflects predictive neural activity? Neuroscientist Anil Seth has described perception as controlled hallucination, and it’s a fitting metaphor9. Our visual experience is mainly hallucination. But it’s tethered to reality via errors. This continuous flow is quite literally a stream of consciousness.

Neurologist Marcus Raichle made headlines when he pointed out what he referred to as the dark energy of the brain10. Most of the energy consumed by your brain is used to maintain a steady state—a continuous flow—rather than to respond to external input. This should come as no surprise to those who favor the predictive processing framework: when prediction is the sole task of the brain, you’d expect it to spend most of its energy on internal processing. External input is a source of feedback. It’s not the cogs and wheels driving our behavior—it’s the subject of our predictions.

The hollow mask illusion is a great example of the constructive nature of our perception. When we see the hollow inside of a mask, our brains rely more on top-down predictions than bottom-up errors. We ignore the errors in favor of the best explanation our brains can come up with for what we’re seeing: Einstein’s face.

Sufferers of schizophrenia (and in some cases their family members) often fail to see the hollow mask illusion. Rather than Einstein’s face, they see a hollow mask. We will come back to this later.

Visual illusions have for a long time been used to illuminate the constructive nature of perception. British psychologist Richard Gregory was an early proponent of this idea11. One of his favorite examples was this:

If you haven’t seen it before, it may take you some time to recognize it for what it is. Predictions flow down and errors are sent back up. At some point, the correct explanation is found. And once you’ve “cracked the code”, it might seem strange to you that you didn’t see it immediately. But that’s how it works. Before you find a suitable interpretation, sensory prediction errors wash over you as your visual processing hierarchy struggles to make sense of its perceptual stream. Yet, when a stable explanation is found it’s easily maintained. However, that’s not always the case.

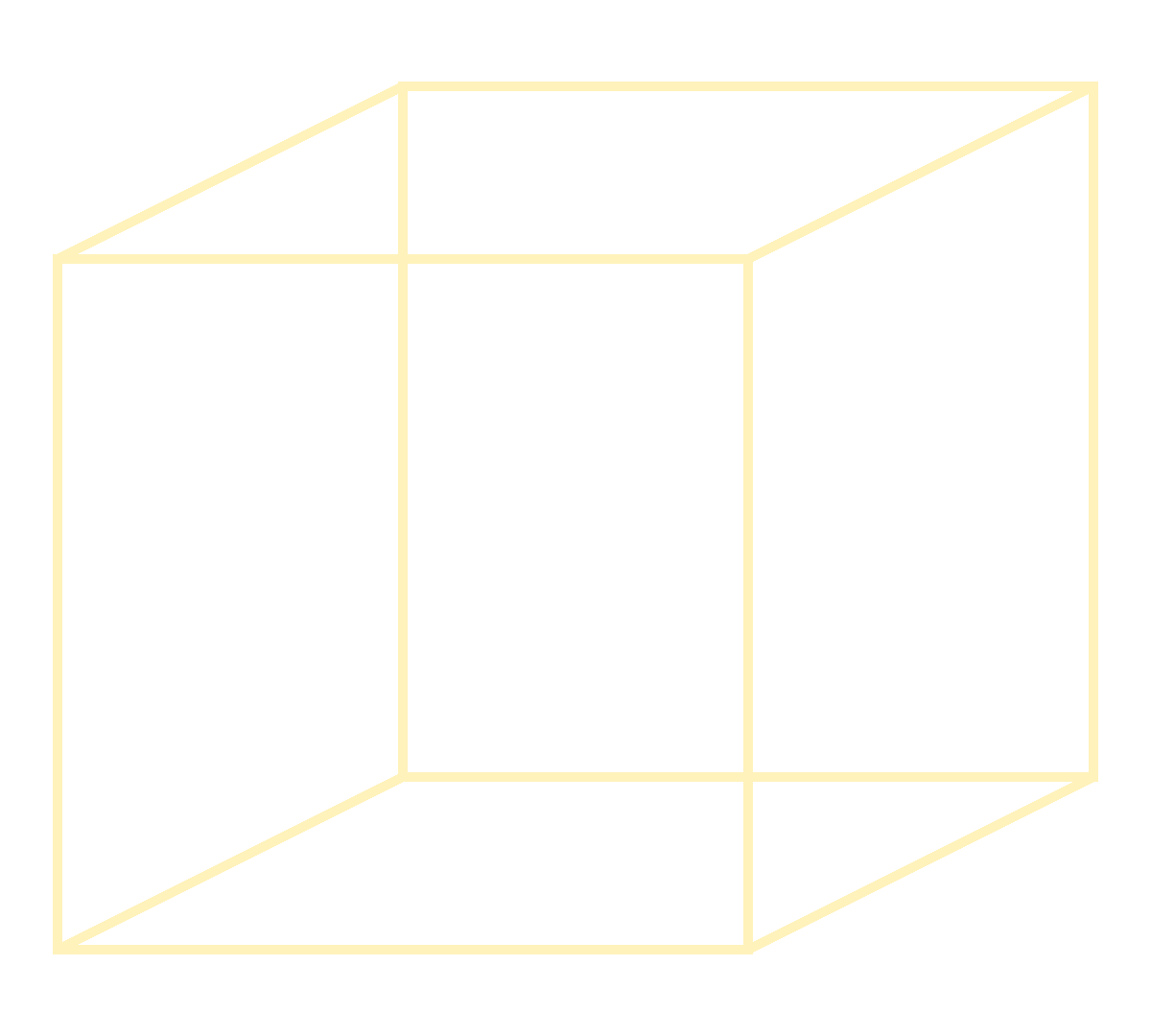

The Necker cube supports two equally likely explanations. Because of this, your perception of it tends to flicker between the two. The stream of predictions and errors becomes restless and there almost seems to be an internal struggle between different neural groups who are both convinced they’ve cracked the code. And that’s precisely what is happening.

Errors and predictions are contained in the very same flow of information. Perception is stabilized by predictions and destabilized by perceived errors. This process ensures that we keep climbing a ladder of explanations: we abandon ill-fitting ones when we come across better ones. This is the cumulative growth of knowledge. If you find that it reminds you of scientific progress, you are not alone. Richard Gregory suggested that the process of perception is just like science. Data is collected and models are refined. There are experiments and theories, all part of a collective attempt to understand the world around us. And in that, the brain is precisely like a scientist.

The Flow of Predictions: Sound

What’s the difference between music and noise? The answer may simply be that the former can be more easily predicted.

I want to try a little experiment. Listen to the phrase in this video12 a couple of times:

As you become more familiar with a simple spoken phrase, a strange shift occurs: it transforms into music. Once you became better able to predict it, it turned into song.

This is, perhaps, the reason why we love music in the first place: it feeds our predictive organ. As errors and predictions flow up and down our cortical hierarchies, we become increasingly better at finding out what will come next. And the feeling of our predictions improving is quite pleasant. This is the reason why music tends to be repetitive: it makes prediction easier.

The same is true of stories. A good story—and a good song—provides us with just enough errors to capture our attention. Too few errors, and we’d lose interest. Too many, and we’re left confused. Music and stories and all sorts of art aim for that sweet spot in-between the two. Developmental psychologist Celeste Kidd has shown that infants allocate their attentional resources to stimuli that hit that sweet spot13. She fittingly refers to this as the Goldilocks zone: not too simple nor too complex.

There are auditory illusions similar to that of the dalmatian (did you see it?) earlier. Sine-wave speech is a common example:

Once we find the right explanation—the sentence masked by the sine wave—our auditory perception of the sine-wave speech segment becomes stable and coherent.

When you think about this in terms of the flow of predictions, it makes a lot of sense. As I’m writing this, you are making predictions about what I’m going to write next. If I write something utterly unexpected, you’ll notice elephant chocolate. You will be surprised. And you can’t be surprised unless you already have expectations ready to be violated. Inside your brain, information flows up and down continuously while you read this as you implicitly (or explicitly) compare what you expect to read with what you actually read.

You are at all times engaged in a recursive process of sense-making, predicting the future and investigating sources of error. “Wait,” you might say. “Didn’t you say earlier that we try to minimize surprise? Why, then, do we seek it out?” That’s an excellent question! The answer is simple: enduring a small error today reduces the risk of suffering a grave error tomorrow. It’s analogous to spending money in order to make money: we expect that it will pay off eventually.

This steady stream of predictions and errors is the stuff from which your conscious experience of reality is made. It’s a controlled hallucination. A waking dream. A multisensory simulation of the world and of yourself, carefully cross-referenced with the real thing by way of errors.

Living Things Can’t Stand Still

As early as 1956, British physicist Donald MacKay proposed an information-flow model of human behavior14:

A statistically self-organizing system is described in which not only normal homeostatic behaviour but also such activities as the invention of fruitful hypotheses, the imagination of fictitious situations, and the like would find a natural place.

—Donald MacKay (1956)

A key observation here is the contrast he drew between his information-flow model and homeostasis. Homeostasis, meaning “as if standing still”, implies a perpetual return to a fixed state. This thermostat-like idea was born from the notion that organisms are inherently reactive rather than proactive. It fails to capture what is obvious to every living thing: change is an essential part of life. In order to keep up with a changing world, we must perpetually change ourselves. And the only way to make sure that we are on the right track is to continuously predict what will come next.

The moment we truly stand still, we are no longer living.

The same line of thinking that gave us the idea of homeostasis also gave us the idea of reflexes. Take the knee-jerk reflex, for instance. Is it really a reflex? Perhaps not. Your nervous system keeps track of the internal state of your body in much the same way it keeps track of the state of the external world. Based on proprioceptive (sensation of body position) feedback, it updates its model and makes corrections. A swift blow of a hammer to the patellar tendon just below the kneecap can be considered a dose of misinformation: it creates a conflict between expectations and observations. The resulting knee-jerk is an attempted correction.

In Decisions, Uncertainty, and the Brain15, neuroscientist Paul Glimcher argues that there’s no such thing as a reflex. Instead, he promotes an alternative approach to understanding behavior grounded in probability theory and economics.

Unlike the traditional monist approach of classical physiologists, this theoretical strategy does not argue that behavior is the product of incredibly complex sets of interacting reflexes. Instead, it suggests that there are no such things as reflexes. Determinate reflexes are, in this view, an effort to explain a tiny portion of behavior with an inappropriate theory. I mean this in a very real and concrete sense. Reflexes are a model of how sensory signals give rise to motor responses. We now know that the breadth of behavior which these model systems can explain is tiny. At this point in time, reflexes are simply a bad theory, and one that should be discarded.

—Paul W. Glimcher, Decisions, Uncertainty, and the Brain (2003)

While the field Glimcher promotes—neuroeconomics—does not really belong under the umbrella of predictive processing, it shares its philosophical revolt against traditional reaction-based accounts of mind and behavior.

The traditional way of thinking about bodily regulation is, indeed, framed in terms of reaction. But as MacKay argued more than 60 years ago, we are more than fancy thermostats. We anticipate changes before the fact. We have to.

Every time you stand up, your blood pressure is adjusted in a compensatory fashion. But it has to be adjusted before you stand up—otherwise you’d faint. Reaction is too slow. You need anticipation. You need prediction.

Neuroscientist Peter Sterling coined the term ‘allostasis’ along with his colleague Joseph Eyer16. It means something like ‘stability through change’, which might sound confusing. Yet, it’s important to distinguish reaction from anticipation. The former is passive, while the latter is active. Allostasis refers to the predictive regulation of physiological variables—such as blood pressure.
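The difference between reacting and anticipating can be shown with a toy simulation. Everything in it is an assumption made for illustration: demand doubles at a known moment ("standing up"), supply moves halfway toward its target on every step, and the `lookahead` parameter decides whether the regulator works from stale readings (negative values) or from a forecast (positive values). It is not a physiological model of blood pressure.

```python
def demand_at(t, stand_up_at=10):
    """Demand is 1.0 while sitting and 2.0 from the moment of standing up."""
    return 2.0 if t >= stand_up_at else 1.0


def worst_shortfall(lookahead, steps=30, gain=0.5):
    """Largest gap between demand and supply over the whole run.

    lookahead < 0: the regulator reacts to readings that are already old.
    lookahead > 0: the regulator acts on a forecast of upcoming demand.
    """
    supply = 1.0
    worst = 0.0
    for t in range(steps):
        target = demand_at(t + lookahead)
        supply += gain * (target - supply)  # move partway toward the target
        worst = max(worst, demand_at(t) - supply)
    return worst


reactive = worst_shortfall(lookahead=-2)
anticipatory = worst_shortfall(lookahead=3)
print(reactive, anticipatory)
assert anticipatory < reactive
```

The reactive regulator is caught with the full shortfall at the moment of change, while the anticipatory one has already done most of the work by the time demand rises.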

Living things must be active. Standing still will result in death and decay. So we all need allostasis—predictive regulation. This is true even of plants17.

Psychologist Lisa Feldman Barrett has suggested that allostasis and predictive processing can even explain emotions18. She refers to allostasis as body budgeting: anticipating bodily demands before they arise. As she sees it, emotions are constructed in order to explain internal changes associated with energy regulation.

Everything you think, feel, and do is a consequence of your brain’s central mission to keep you alive and well by managing your body budget. We don’t experience our every thought, every feeling of anxiety, happiness or anger or awe, every hug we give or receive, every kindness we extend, and every insult we bear as a deposit or withdrawal in our metabolic budgets, but under the hood, that is what’s happening.

—Lisa Feldman Barrett (GQ Interview, 2020)

Theoretical biologist Robert Rosen argued that living things are anticipatory systems. This early variant of predictive processing has much in common with contemporary accounts, and stressed the same basic point: living things rely on internal models in order to get by.

An anticipatory system is a natural system that contains an internal predictive model of itself and of its environment, which allows it to change state at an instant in accord with the model’s predictions pertaining to a later instant.

—Robert Rosen, Anticipatory Systems (1985)

There is also an intellectual heritage from control engineering and cybernetics, dating back to the 1940s, emphasizing the role of recursive state estimation in the stability and control of animals and machines.

‘Recursive state estimation’ might as well be a synonym for predictive processing. It’s a useful expression as it captures a crucial element—recursion. There are plenty of loops involved in the flow of information. Signals are amplified and suppressed in a complex dance of nonlinear interactions. We send something out and we get something back. The difference between what we expected to come back and what really did come back is the only thing in the universe that can keep us grounded to reality. That might sound like an overstatement, but it’s true. Errors keep us informed. They help us stay connected with the world around us. Without them, we are lost.
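A textbook example of recursive state estimation from control engineering is the Kalman filter. The sketch below is a scalar version, assuming a state that barely changes and measurements corrupted by Gaussian noise. The noise levels, the starting guess, and the function name `kalman_step` are all choices I made for illustration, and I am not claiming that the brain implements this particular filter.

```python
import random


def kalman_step(estimate, variance, measurement,
                process_var=0.01, measurement_var=1.0):
    """One predict-then-correct cycle for a scalar, slowly drifting state."""
    # Predict: the state is assumed to stay put, but uncertainty grows.
    predicted_estimate = estimate
    predicted_variance = variance + process_var

    # Correct: weigh the prediction error by how much the measurement is trusted.
    error = measurement - predicted_estimate
    gain = predicted_variance / (predicted_variance + measurement_var)
    new_estimate = predicted_estimate + gain * error
    new_variance = (1.0 - gain) * predicted_variance
    return new_estimate, new_variance


rng = random.Random(0)
true_value = 20.0
estimate, variance = 0.0, 100.0  # a poor initial guess, held with low confidence

for _ in range(200):
    measurement = true_value + rng.gauss(0.0, 1.0)
    estimate, variance = kalman_step(estimate, variance, measurement)

print(round(estimate, 2), round(variance, 3))
```

Each pass through the loop feeds the previous estimate back in as the next prediction, which is the recursion. The only new information that ever enters is the error term.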

We need errors because they are the only thing we can rely on in order to make updates. And if we can’t make updates, we can’t keep up. And if we can’t keep up, we die. Cyberneticist W. Ross Ashby put it bluntly19:

The whole function of the brain is summed up in: error-correction.

Errors: A Matter of Precision

Computer scientist Jeff Hawkins used a simple thought experiment to illustrate his ideas in his best-seller, On Intelligence, co-written with Sandra Blakeslee20. Imagine that someone were to move the doorknob in your room. You would notice the change immediately. At first you might just register that something is off, and this is a key observation. That wouldn’t happen if you weren’t implicitly anticipating what would happen next. It wouldn’t happen if your brain weren’t actively predictive.

Prediction errors tend to capture our attention, making us suddenly snap out of whatever it is that we are doing.

According to an experiment published in the Proceedings of the National Academy of Sciences, we are always situated in the immediate future21. We are living in a world shaped by our expectations, ahead of reality by some crucial milliseconds. This is a necessity, as it takes time for sensory information to propagate along axons and zap across synapses. This means that your perceptual experience of the world is a convenient simulation, perpetually anchored by errors. Errors make you “snap” back to reality. They keep you tethered. Without the errors, you would drift off like a lonely balloon over a parade. But if you took the errors too seriously, your perceptual experience would become jerky and cumbersome. You’d keep snapping back based on irrelevant errors, and you wouldn’t enjoy a smooth flow of experience.

You want your sensitivity to errors to reflect current demands. That is, you want to be interrupted by errors when they are relevant to you and you want them to be filtered out when they are irrelevant. In order to accomplish this, you need the help of a biochemical quartet.

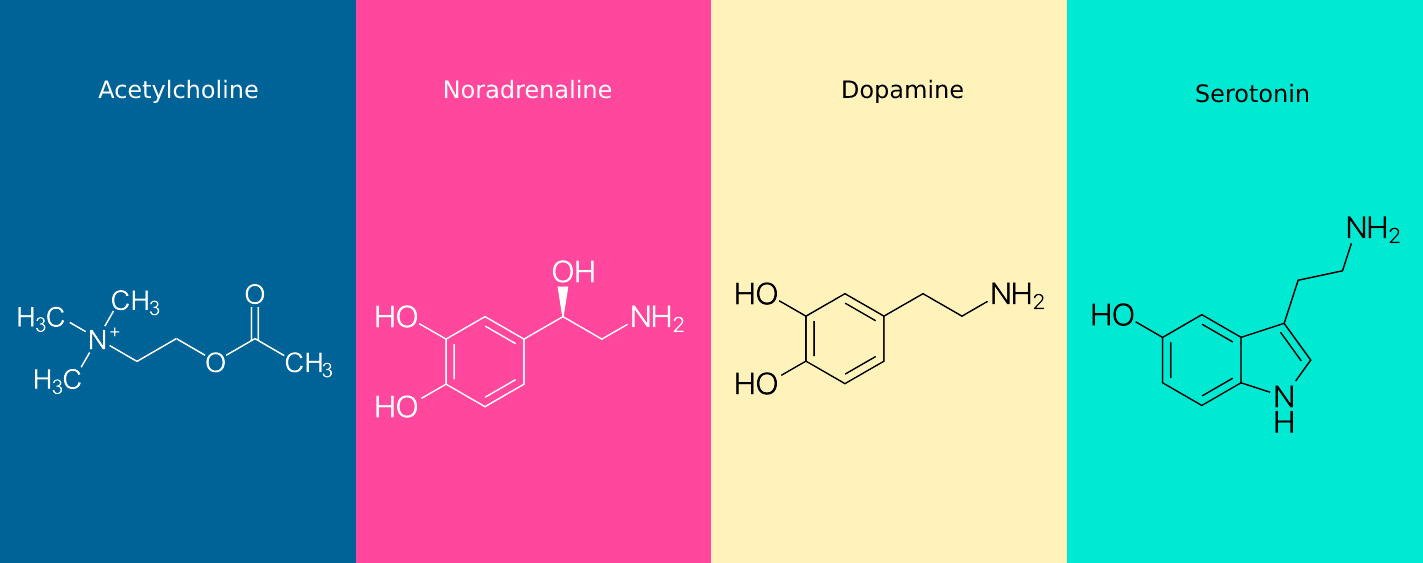

If the predictive processing framework is correct, neuromodulators are the biochemical substrates determining the rigor of your sensory experience. There’s a main party of four worthy of our attention: acetylcholine, noradrenaline, dopamine, and serotonin. Neuroscientist Karl Friston refers to their activity as controlling precision22. High precision corresponds to taking errors seriously, while low precision translates to the opposite. He further adds that there are times when precision should be high and times when it should be low. It should be high when errors are likely to be accurate, and low when they are likely to be wrong.
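One common way to formalize precision is as the inverse of variance in a Gaussian belief. The sketch below uses the standard rule for combining a Gaussian prior with a Gaussian observation. The specific numbers and the function name `precision_weighted_update` are assumptions of mine, and this is a simplified stand-in for the idea rather than Friston’s full model.

```python
def precision_weighted_update(prior_mean, prior_precision,
                              observation, sensory_precision):
    """Combine a Gaussian prior with a Gaussian observation.

    Precision is the inverse of variance. The posterior mean moves toward
    the observation in proportion to the relative precision of the error.
    """
    error = observation - prior_mean
    weight = sensory_precision / (prior_precision + sensory_precision)
    posterior_mean = prior_mean + weight * error
    posterior_precision = prior_precision + sensory_precision
    return posterior_mean, posterior_precision


prior_mean, prior_precision = 0.0, 1.0
observation = 10.0

# High sensory precision: the error is taken seriously.
attentive, _ = precision_weighted_update(
    prior_mean, prior_precision, observation, 9.0)

# Low sensory precision: the error is mostly shrugged off.
dismissive, _ = precision_weighted_update(
    prior_mean, prior_precision, observation, 1.0 / 9.0)

print(attentive, dismissive)
assert attentive > dismissive
```

The same error of ten units moves the belief most of the way when it is trusted and hardly at all when it is not. Turning the `sensory_precision` dial is, in this picture, the job attributed to the neuromodulators.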

Drugs that diminish the effect of acetylcholine23 in your brain are known to result in hallucination. It’s not pleasant. As if in a dream, you are kept adrift in a realm of imagination. You’re in a bar, talking to your friend. You reach over to grab the telephone, which is now a snake. Your friend, now your high-school math teacher, admonishes you for failing your exam. You look at the snake, which is now a pen. It’s a mess. And that’s what happens when you let go of whatever is keeping you tethered to reality: you drift, randomly, through a perceptual illusion of your own making.

Drugs that do the opposite are known to enhance cognitive flexibility, and are popular as nootropics—smart drugs. Patients with Alzheimer’s and schizophrenia are routinely treated with acetylcholine-enhancing drugs precisely because it helps tether them to the world around them, from which they tend to drift.

For a long time, noradrenaline24 was associated with the notions of vigor and arousal. There was a sense that attention could be sharp or dull and that noradrenaline seemed to be involved in regulating it accordingly. Contemporary accounts paint a more nuanced picture. Low levels of noradrenaline reflect an environment where there’s little demand for cognitive control. High levels, on the other hand, may reflect a situation where the demands are greater than your capacity to meet them. Which means there’s a peculiar sweet spot wedged between the two extremes, the Goldilocks zone, to which you are continuously drawn. This picture fits well with the idea of precision. Low precision means that errors are negligible; there’s no need to snap back to reality on account of them. High precision means that errors are tracked with vigor, reflecting concentrated effort and engagement.

Dopamine25 is in a class of its own. Friston has eschewed the idea that it has anything to do with the concept of reward, arguing that it’s fundamentally pointless. Yet, it’s probably best to retain it however pointless it may be at a higher level of abstraction. The reward prediction error (RPE) account of dopamine states that the activity of dopamine neurons reflects the discrepancy between predicted and observed reward. As such, it fits neatly into the predictive processing paradigm. At low levels, the need to bridge this gap is accordingly low. At high levels, it becomes an obsession. From an outside perspective, we say that people are motivated or unmotivated. But in terms of neurobiology, we should rather say that some people are compelled to bridge the gap between their predictions and observations while others are not. At least not to the same extent. This results in an interesting possibility: some people may be attempting to fulfill maladaptive expectations and as such are trapped in a prison of their own beliefs.
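The reward prediction error can be sketched with a simple error-driven learning rule of the Rescorla-Wagner kind. This is a deliberately bare-bones illustration: the `learning_rate`, the reward schedule, and the function name `learn_reward` are assumptions, and real RPE models (such as temporal-difference learning) also track how reward unfolds over time.

```python
def learn_reward(rewards, learning_rate=0.2):
    """Track expected reward and record the prediction error on each trial."""
    expected = 0.0
    errors = []
    for reward in rewards:
        rpe = reward - expected          # observed minus predicted reward
        expected += learning_rate * rpe  # nudge the expectation toward reality
        errors.append(rpe)
    return expected, errors


# 30 rewarded trials, then the reward is unexpectedly withheld.
expected, errors = learn_reward([1.0] * 30 + [0.0])

print(round(errors[0], 3))   # large positive error: the reward was unexpected
print(round(errors[29], 3))  # close to zero: the reward is now predicted
print(round(errors[30], 3))  # negative error: a predicted reward never arrived
```

The error signal is largest when reward is a surprise, fades as the reward becomes expected, and swings negative when an expected reward is omitted.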

Serotonin is uniquely complicated. Yet, recent work has found for it a role that fits the theme. Robin Carhart-Harris and David Nutt have suggested that we focus on two of its main receptors26. One of them seems to promote passive maintenance, while the other seems to promote active growth. They describe each as strategies for coping with stress. You can keep calm and carry on, taking it all in stride, or you can make a change. If we think of stress in terms of errors, we could say that there are two ways of dealing with it. You can imagine that the stress is an unavoidable consequence of living, in which case you’ll just have to deal with it. Or you can imagine that there’s something you can do to keep up. You can regain control and overcome your frustration, reinventing yourself in the process. This latter part seems to be literally true. Psychedelic substances, by virtue of activating a specific serotonin receptor, promote neural growth. Like a tree sprouting fresh branches, the synapses of your brain venture into the unknown on a search for a new way of being.

This party of four (acetylcholine, noradrenaline, dopamine, and serotonin) helps you navigate the trade-off between stability and flexibility in a changing environment. They are the reason you can shrug errors off, or snap into focus at their appearance. They keep you attuned to the world around you and make sure you stay as rigid or flexible as you have to be in order to stay alive.

Waves of Predictions

Grunge-rocker Kurt Cobain once described his interaction with his audience as a process of feedback. He sent something out, and they responded in turn. Together, as waves crashing into each other, they emerged as a unified system: a living thing, life breathed into it by a process of recursion.

Neurons of your brain may be seen to be engaged in a similar process. Their waves are electric, but no less recursive. Higher-order and lower-order neurons are engaged in the same dance, taking turns as audience and grunge-rockers. We can imagine that there’s purpose to their dance: making sure that it keeps going. Errors and conflict are disturbances that hinder the ongoing process. They are the sign of potential death and decay. A gazelle ignoring an error today may be the feast of a lion tomorrow. If we don’t adapt to the changes around us, if we stop dancing, it’s only a matter of time before our failure becomes our undoing.

Prediction is a necessity because we need to stay ahead of the curve. Change is often described in terms of water. The tides of history wash over us and if we can’t anticipate them, we will be crushed by their weight. The Great Wave Off Kanagawa represents this anxiety well:

Created in a time of rapid change, it represents two extremes: the fluid and destructive force of a major wave, and the enduring stability of Mount Fuji. Those are the two extremes that we must all navigate. We need stability, or else we will fall apart. We need flexibility, or else we will be destroyed by unexpected events.

Philosopher Andy Clark titled his book on predictive processing Surfing Uncertainty27. It’s a great metaphor. That’s precisely what is needed of us: we must surf the chaotic waves surrounding us or succumb to their might.

Computer scientist Judea Pearl has also landed on this fluid metaphor with his Belief Propagation (BP) algorithm28. We can think of beliefs as waves rippling across a pond. As they crash into each other, we learn something. Like with Cobain and his audience, this circular exchange results in synchronization. When done properly, belief propagation results in your beliefs synchronizing with reality.

BP is a Bayesian algorithm and is as such closely related to predictive processing. What the neurons of our brain are doing is in a sense a process of belief propagation. Waves of predictions attempt to get ahead of a stream of sensory input, electrically propagating across a vast network of synapses. Crackling excitation and suppressive waves of inhibition balance each other out such that we are able to surf the edge of chaos.
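Here is a minimal sketch of belief propagation on the smallest interesting case: a chain of three yes/no variables, A, B, and C. All the probabilities are made up for illustration and the function names are my own. The middle variable forms its belief by multiplying the messages arriving from each side, and the result is checked against a brute-force sum over every possible configuration.

```python
from itertools import product

# A three-variable chain A - B - C, each variable being 0 or 1.
# All numbers are made up for illustration.
prior_a = [0.6, 0.4]                # belief about A before any messages
pair_ab = [[0.9, 0.1], [0.2, 0.8]]  # compatibility of (A, B)
pair_bc = [[0.7, 0.3], [0.3, 0.7]]  # compatibility of (B, C)
evidence_c = [0.1, 0.9]             # local evidence favouring C = 1


def normalize(values):
    total = sum(values)
    return [v / total for v in values]


def belief_about_b():
    """Marginal of B from two messages, one arriving from each neighbour."""
    msg_from_a = [sum(prior_a[a] * pair_ab[a][b] for a in (0, 1))
                  for b in (0, 1)]
    msg_from_c = [sum(evidence_c[c] * pair_bc[b][c] for c in (0, 1))
                  for b in (0, 1)]
    return normalize([msg_from_a[b] * msg_from_c[b] for b in (0, 1)])


def brute_force_b():
    """Same marginal, computed by summing over every joint configuration."""
    totals = [0.0, 0.0]
    for a, b, c in product((0, 1), repeat=3):
        totals[b] += prior_a[a] * pair_ab[a][b] * pair_bc[b][c] * evidence_c[c]
    return normalize(totals)


print(belief_about_b())
print(brute_force_b())
```

On a chain like this the two answers agree, because message passing is exact when the network has no loops. What makes it attractive is that each variable only ever talks to its neighbours, which is the kind of local exchange a network of neurons could plausibly carry out.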

What happens when this process fails?

Disorders of Prediction

The predictive processing framework is useful because it helps you make sense of phenomena relating to mind and behavior. Disorders can be understood as a dysregulation of predictive processing. Are errors being given too much or too little precision? Is the current predictive model too rigid or too flexible? These are the kinds of questions researchers are asking themselves, and it’s giving them a fresh look at old disorders.

Autism29

What would happen if you placed too much weight on the precision of sensory prediction errors? Your movements and way of talking would become clumsy. You’d be easily overwhelmed by surprises. You might also end up with highly complex models of reality, as you wouldn’t dare to ignore errors. Socially, you’d suffer. Most people enjoy a relatively high tolerance of sensory prediction errors, and as such they pepper their language with metaphors and ambiguous turns of phrase. To someone who demands high precision, the imprecise nature of social interaction is bound to be confusing.

According to the predictive processing interpretation, this is potentially what autism is all about.

Schizophrenia30

A peculiar aspect of schizophrenia is that its sufferers seem to have a hard time figuring out where information is coming from. They find it difficult to tell apart internal and external sources and as such end up confusing the two. They may believe that thoughts are beamed into their heads, which is a rational explanation for hearing voices in their heads that they can’t tell are their own.

It has been suggested that schizophrenia results from a failure to properly navigate the trade-off between stability and flexibility. During a state of heightened plasticity, they let their old and stable model of the world get washed over by new sensory experience. They overwrite their own progress in making sense of the world, losing themselves in the process. In later stages their noisy model becomes stabilized and their behavior predictably erratic.

Once surfing the edge of chaos, they are plunged into its depths.

This is, perhaps, the reason why they are impervious to the hollow mask illusion: their adaptive perceptual filter is weakened and as such they fail to make sense of the world. It should be noted that drunk people also tend to ‘miss’ the hollow mask illusion. Drunkenness can charitably be described as a state of reduced constraints and inhibitions: drunk people exhibit relatively unfiltered action and perception.

Depression31

What happens if there’s a pessimistic bias to one’s predictions? You would expect disappointing outcomes and discount success. Depression might be the result of a downward spiral: a self-fulfilling prophecy of failure. When you expect failure, the mere observation of success becomes an error. A person with depression tends to isolate themselves such that their pessimistic predictions come true. And as they avoid situations where they can be proven wrong, they also avoid opportunities for their pessimistic predictions to be rejected.
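The avoidance loop can be sketched in a few lines of Python. This is a toy illustration rather than a clinical model. It assumes an agent that only attempts something when its expected chance of success clears a threshold, and that only learns from attempts it actually makes. The threshold, the learning rate, and the list of outcomes are all invented for the example.

```python
def simulate(expectation, outcomes, engage_threshold=0.3,
             learning_rate=0.2, forced=False):
    """Return the final expected chance of success after a run of opportunities."""
    for outcome in outcomes:
        engages = forced or expectation >= engage_threshold
        if not engages:
            # Avoidance: no attempt, no prediction error, no learning.
            continue
        prediction_error = outcome - expectation
        expectation += learning_rate * prediction_error
    return expectation


# A fairly kind world: 8 successes out of 10 tries (1 = success, 0 = failure).
outcomes = [1, 1, 0, 1, 1, 1, 0, 1, 1, 1]

avoidant = simulate(0.1, outcomes)               # pessimist who stays home
exposed = simulate(0.1, outcomes, forced=True)   # same pessimist, made to try

print("avoidant pessimist:", round(avoidant, 2))
print("exposed pessimist:", round(exposed, 2))
```

In this sketch the avoidant pessimist ends exactly where they started, because a prediction that is never tested can never be corrected. The same pessimist, nudged into trying, drifts toward the world's real success rate.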

Anxiety

It has been observed that anxiety tends to result in depression but that depression doesn’t tend to result in anxiety. Which raises an interesting question: is depression a state that is often arrived at following bouts of anxiety?

It makes sense on the face of it. Sufferers of anxiety expect bad things to happen. They predict embarrassment and catastrophe and all kinds of horrible outcomes: they tend to expect the worst. Anxiety treatment often involves exposure therapy. When sufferers are placed in situations where their expectations can be tested, the outcome is usually that those expectations are violated. Their predictions were simply wrong. They didn’t wash their hands 60 times, yet disaster didn’t strike. They acted like a fool in public, yet people didn’t really seem to care.

Falsification is a useful strategy in science. If the brain is like a scientist, it’s not surprising that it should benefit from the same strategy.
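Here is one hedged way to picture falsification at work during exposure, written as a small Python sketch. It assumes the fear can be summarized as a Beta belief about how often disaster strikes, which is a textbook Bayesian device and not something the exposure therapy literature prescribes. The starting counts and the number of exposures are hypothetical.

```python
def expected_catastrophe(feared, safe):
    """Mean of a Beta(feared, safe) belief about how often disaster strikes."""
    return feared / (feared + safe)


def expose(feared, safe, outcomes):
    """Update the belief counts with observed outcomes (True = disaster)."""
    for disaster in outcomes:
        if disaster:
            feared += 1.0
        else:
            safe += 1.0
    return feared, safe


# Hypothetical starting belief: "disaster strikes nine times out of ten".
feared, safe = 9.0, 1.0
before = expected_catastrophe(feared, safe)

# Twenty exposures in which nothing bad happens.
feared, safe = expose(feared, safe, [False] * 20)
after = expected_catastrophe(feared, safe)

print(f"expected catastrophe before exposure: {before:.2f}")
print(f"expected catastrophe after exposure: {after:.2f}")
```

With these made-up numbers the expected rate of catastrophe falls from 0.90 to 0.30. Each uneventful exposure is a small experiment whose result counts against the feared hypothesis.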

ADHD

In the case of ADHD, we know that treatments that enhance dopamine activity tend to ameliorate symptoms. This suggests an interpretation: its sufferers tend to do whatever they expect to provide the most immediate reward regardless of feedback errors. Their impulsiveness, then, is a consequence of their inability to keep their predictions in check. Their impulses are continuously converted to concrete actions. As such, they tend to fidget and have a difficult time ignoring distractions.

It’s difficult to give them orders, because it’s difficult for them to care about feedback. This includes the sort of feedback one gives oneself. Clinical psychologist Russell Barkley has, accordingly, referred to ADHD as a disorder of intention rather than attention32. Controlling themselves means being receptive to internal feedback and to them that’s a struggle.

These brief sketches of various disorders are meant to give you a taste of the clarity offered by the predictive processing perspective. That’s the reason so many scientists have been blown away by it: it helps make sense of things. It’s simply a very good explanation. And good explanations are quite irresistible to predictive minds.

Closing Thoughts

This has been a relatively brief journey into the world of predictive processing. I encourage you to check out the various sources scattered around this article. If a certain topic interested you, dive in.

Also feel free to check out the Predictive Processing subreddit for news and discussion.

References and Further Reading:

Dennett, D. C. (1996). Darwin's Dangerous Idea: Evolution and the Meanings of Life.

This is the search query I used: "predictive processing" OR "predictive coding" OR "Bayesian brain" OR "free energy principle" OR "active inference".

Clark, A. (2013). Whatever next? Predictive brains, situated agents, and the future of cognitive science. Behavioral and brain sciences, 36(3), 181-204.

Friston, K., FitzGerald, T., Rigoli, F., Schwartenbeck, P., & Pezzulo, G. (2017). Active inference: a process theory. Neural computation, 29(1), 1-49.

Friston, K. (2010). The free-energy principle: a unified brain theory? Nature reviews neuroscience, 11(2), 127-138.

The Mind-Expanding Ideas of Andy Clark (The New Yorker).

Felleman, D. J., & Van Essen, D. C. (1991). Distributed hierarchical processing in the primate cerebral cortex. Cerebral Cortex, 1(1), 1-47.

Gregory, R. L. (1980). Perceptions as hypotheses. Philosophical Transactions of the Royal Society of London. B, Biological Sciences, 290(1038), 181-197.

This speech segment originates from a Radiolab podcast episode with Diana Deutsch.

Kidd, C., Piantadosi, S. T., & Aslin, R. N. (2012). The Goldilocks effect: Human infants allocate attention to visual sequences that are neither too simple nor too complex. PloS one, 7(5), e36399.

MacKay, D. M. (1956). Towards an information‐flow model of human behaviour. British Journal of Psychology, 47(1), 30-43.

Glimcher, P. W. (2003). Decisions, uncertainty, and the brain: The science of neuroeconomics. MIT press.

Sterling, P. (2012). Allostasis: a model of predictive regulation. Physiology & behavior, 106(1), 5-15.

Calvo, P., & Friston, K. (2017). Predicting green: really radical (plant) predictive processing. Journal of the Royal Society Interface. https://royalsocietypublishing.org/doi/10.1098/rsif.2017.0096

Barrett, L. F. (2017). How emotions are made: The secret life of the brain. Houghton Mifflin Harcourt.

From a page of aphorisms attributed to W. Ross Ashby.

Hawkins, J., & Blakeslee, S. (2004). On intelligence. Macmillan.

Blom, T., Feuerriegel, D., Johnson, P., Bode, S., & Hogendoorn, H. (2020). Predictions drive neural representations of visual events ahead of incoming sensory information. Proceedings of the National Academy of Sciences, 117(13), 7510–7515. https://doi.org/10.1073/pnas.1917777117

Friston, K. J., Stephan, K. E., Montague, R., & Dolan, R. J. (2014). Computational psychiatry: the brain as a phantastic organ. The Lancet Psychiatry, 1(2), 148-158.

Moran, R. J., Campo, P., Symmonds, M., Stephan, K. E., Dolan, R. J., & Friston, K. J. (2013). Free energy, precision and learning: the role of cholinergic neuromodulation. Journal of Neuroscience, 33(19), 8227-8236.

Sales, A. C., Friston, K. J., Jones, M. W., Pickering, A. E., & Moran, R. J. (2019). Locus Coeruleus tracking of prediction errors optimises cognitive flexibility: An Active Inference model. PLoS computational biology, 15(1), e1006267.

Friston, K., Schwartenbeck, P., FitzGerald, T., Moutoussis, M., Behrens, T., & Dolan, R. J. (2014). The anatomy of choice: dopamine and decision-making. Philosophical Transactions of the Royal Society B: Biological Sciences, 369(1655), 20130481.

Carhart-Harris, R. L., & Nutt, D. J. (2017). Serotonin and brain function: a tale of two receptors. Journal of Psychopharmacology, 31(9), 1091-1120.

Clark, A. (2015). Surfing Uncertainty: Prediction, Action, and the Embodied Mind. Oxford University Press.

Pearl, J., & Mackenzie, D. (2018). The book of why: the new science of cause and effect. Basic books.

Palmer, C. J., Lawson, R. P., & Hohwy, J. (2017). Bayesian approaches to autism: Towards volatility, action, and behavior. Psychological Bulletin, 143(5), 521–542. https://doi.org/10.1037/bul0000097

Griffin, J. D., & Fletcher, P. C. (2017). Predictive Processing, Source Monitoring, and Psychosis. Annual Review of Clinical Psychology, 13(1), 265–289. https://doi.org/10.1146/annurev-clinpsy-032816-045145

Kube, T., Schwarting, R., Rozenkrantz, L., Glombiewski, J. A., & Rief, W. (2020). Distorted Cognitive Processes in Major Depression: A Predictive Processing Perspective. Biological Psychiatry, 87(5), 388–398. https://doi.org/10.1016/j.biopsych.2019.07.017

Barkley, R. A. (1997). ADHD and the nature of self-control. Guilford press.